JSON prompts vs prose prompts: a 50-ad side-by-side test

We ran 50 ad image prompts through Ideogram v3 in both prose and JSON formats — the format that wins depends entirely on the category, and the loser surprised us.

Prose won. Mostly. But the categories where JSON beat prose are exactly the ones founders care most about — fintech, SaaS-adjacent scenes, and anything built around a visual metaphor. That's the uncomfortable finding we sat with for a few weeks before writing this up.

We expected JSON-structured prompts to be cleaner, more predictable, and easier to iterate. In practice, diffusion models like Ideogram v3 don't read JSON the way a parser does. They treat it as just another sequence of tokens. What JSON does do — reliably — is force you, the prompt author, to be explicit about fields you'd otherwise blur together in a run-on sentence. The format disciplines the writer. The model barely notices.

- In a 50-ad side-by-side test on Ideogram v3, prose prompts produced higher-rated images in lifestyle, people-focused, and outdoor categories; JSON won decisively in fintech, abstract-metaphor, and SaaS scenes.

- JSON's real advantage is structural discipline: it forces you to separate subject, setting, lighting, composition, and mood into distinct fields, which reduces the most common failure mode — over-dense, contradictory prose.

- Prose prompts degrade faster under iteration: after 2 edits, prose prompts had accumulated qualifier bloat with no image quality improvement.

- Our internal scene distiller (see lib/creative/scene-distiller.ts) uses prose output, but that prose is generated from a structured intermediate representation: a hybrid approach that outperformed both pure formats.

- The practical rule: start with JSON to think, finish with prose to render.

What we tested (the methodology)

We pulled 50 ad briefs from real campaigns across e-commerce, SaaS, fintech, consumer apps, and local services. For each brief, we wrote two prompts targeting the same visual intent: one in natural prose, one in JSON with explicit field labels (subject, setting, lighting, composition, mood, avoid).

Both prompts were fed to Ideogram v3 with identical generation parameters — same seed range, same aspect ratio, same style preset. Images were rated blind by three reviewers on a 1–5 scale across four axes: visual specificity, brand-fit, stock-photo avoidance, and compositional clarity. We averaged scores per ad and per category.
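A minimal sketch of that averaging, assuming each rating is stored as one record per rater per image with the four axis scores. The `Rating` shape and the function names are hypothetical, not our actual tooling. Run it once over the prose ratings and once over the JSON ratings. Averaging ad scores within a category (rather than pooling every raw rating) is an assumption here; it makes each ad count equally.

```typescript
// Hypothetical record: one rater's 1–5 scores on the four axes for one image.
interface Rating {
  adId: string;
  category: string;
  specificity: number;
  brandFit: number;
  stockAvoidance: number;
  clarity: number;
}

function mean(xs: number[]): number {
  return xs.reduce((sum, x) => sum + x, 0) / xs.length;
}

// One rater's overall score for one image: the mean of the four axes.
function axisMean(r: Rating): number {
  return mean([r.specificity, r.brandFit, r.stockAvoidance, r.clarity]);
}

// Per-ad score: average each rater's axis mean across all raters of that ad.
function scorePerAd(ratings: Rating[]): Record<string, number> {
  const byAd: Record<string, number[]> = {};
  for (const r of ratings) {
    (byAd[r.adId] = byAd[r.adId] || []).push(axisMean(r));
  }
  const out: Record<string, number> = {};
  for (const adId of Object.keys(byAd)) out[adId] = mean(byAd[adId]);
  return out;
}

// Per-category score: average the per-ad scores of the ads in each category.
function scorePerCategory(ratings: Rating[]): Record<string, number> {
  const perAd = scorePerAd(ratings);
  const categoryOf: Record<string, string> = {};
  for (const r of ratings) categoryOf[r.adId] = r.category;
  const byCat: Record<string, number[]> = {};
  for (const adId of Object.keys(perAd)) {
    const cat = categoryOf[adId];
    (byCat[cat] = byCat[cat] || []).push(perAd[adId]);
  }
  const out: Record<string, number> = {};
  for (const cat of Object.keys(byCat)) out[cat] = mean(byCat[cat]);
  return out;
}
```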

A few important constraints on interpreting these results:

- "Better prompt format" means better-rated output, not better click-through rate. We don't have post-click data from this test.

- Our raters were all familiar with advertising and biased toward avoiding clichés — which matters if your goal is thumb-stopping creative, less so if you're optimizing for safe and expected.

- We did not test GPT-4o or DALL·E 3 here. Ideogram v3 has a specific sensitivity to long, dense prompts that other models may not share.

Our internal image pipeline (see lib/creative/scene-distiller.ts) produces prose scene descriptions. But a question kept coming up in engineering: should the distiller output structured JSON that the image API receives directly, rather than wrapping scenes in a prose template? This test was the answer.

Category-by-category score breakdown

Here's the full picture across our six categories and twelve sub-categories. Scores are averaged across three raters on the 1–5 scale; inter-rater agreement (Krippendorff's α) across all 100 images was 0.71, which is acceptable for creative evaluation.

| Category | Sub-category | Prose avg (1–5) | JSON avg (1–5) | Winner |
|---|---|---|---|---|
| Consumer goods | Food & beverage | 4.1 | 3.4 | Prose |
| Consumer goods | Apparel & fashion | 4.0 | 3.6 | Prose |
| Consumer goods | Fitness & wellness | 3.9 | 3.5 | Prose |
| People-focused | Emotional register | 4.2 | 3.3 | Prose |
| People-focused | Aspirational lifestyle | 3.8 | 3.5 | Prose |
| Outdoor & environment | Landscape | 4.0 | 3.4 | Prose |
| Outdoor & environment | Urban street | 3.7 | 3.6 | Prose (marginal) |
| SaaS & tech | Product-adjacent scene | 3.3 | 3.9 | JSON |
| SaaS & tech | Abstract concept | 3.0 | 4.0 | JSON |
| Fintech | Financial stress / urgency | 2.9 | 4.1 | JSON |
| Fintech | Abstract metaphor (coins, vaults) | 2.8 | 4.2 | JSON |
| Local services | Storefront / interior | 3.6 | 3.5 | Prose (marginal) |
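To make the Winner column reproducible, here is a sketch that recomputes each gap from the table's own averages. The 0.1-point "marginal" cutoff is our assumption for illustration; under it, Urban street (3.7 vs 3.6) also counts as marginal, and the largest gap in either direction is fintech abstract metaphor.

```typescript
// Sub-category averages copied from the table above.
interface ScoreRow {
  sub: string;
  prose: number;
  json: number;
}

const tableRows: ScoreRow[] = [
  { sub: "Food & beverage", prose: 4.1, json: 3.4 },
  { sub: "Apparel & fashion", prose: 4.0, json: 3.6 },
  { sub: "Fitness & wellness", prose: 3.9, json: 3.5 },
  { sub: "Emotional register", prose: 4.2, json: 3.3 },
  { sub: "Aspirational lifestyle", prose: 3.8, json: 3.5 },
  { sub: "Landscape", prose: 4.0, json: 3.4 },
  { sub: "Urban street", prose: 3.7, json: 3.6 },
  { sub: "Product-adjacent scene", prose: 3.3, json: 3.9 },
  { sub: "Abstract concept", prose: 3.0, json: 4.0 },
  { sub: "Financial stress / urgency", prose: 2.9, json: 4.1 },
  { sub: "Abstract metaphor (coins, vaults)", prose: 2.8, json: 4.2 },
  { sub: "Storefront / interior", prose: 3.6, json: 3.5 },
];

// JSON minus prose, in hundredths of a point. Working in integers keeps
// binary floating-point error (3.7 - 3.6 is not exactly 0.1) out of comparisons.
function gapHundredths(r: ScoreRow): number {
  return Math.round(r.json * 100) - Math.round(r.prose * 100);
}

// Assumed rule: a nonzero gap of 0.1 points or less is labeled marginal.
function winnerLabel(r: ScoreRow): string {
  const gap = gapHundredths(r);
  const side = gap > 0 ? "JSON" : gap < 0 ? "Prose" : "Tie";
  return gap !== 0 && Math.abs(gap) <= 10 ? `${side} (marginal)` : side;
}

// The sub-category where the two formats diverge the most.
function largestGap(rows: ScoreRow[]): ScoreRow {
  return rows.reduce((best, r) =>
    Math.abs(gapHundredths(r)) > Math.abs(gapHundredths(best)) ? r : best,
  );
}

for (const r of tableRows) {
  const gap = (gapHundredths(r) / 100).toFixed(1);
  console.log(`${r.sub}: ${winnerLabel(r)} (JSON - prose = ${gap})`);
}
```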

The pattern: prose is better when the model has seen ten thousand variations of the scene and just needs to render one cleanly. JSON is better when you're asking the model to hold multiple competing constraints simultaneously — and you need one of them to be a hard exclusion.

The most striking gap is in fintech abstract metaphor: prose averaged 2.8 against JSON's 4.2. When we read back the prose prompts in that category, the problem was obvious — writers were hedging their visual metaphors with qualifications ("a sense of financial pressure, perhaps shown through tight framing or desaturated color") that gave the model nowhere specific to go. JSON forced a concrete commitment: "subject": "A single crumpled bill pressed flat under a glass paperweight".

If your prompt contains more than one constraint you genuinely cannot compromise on, use JSON. Prose "do not include" clauses are overridden at a much higher rate than a dedicated "avoid" array field.

Where JSON loses

The failure mode for JSON prompts is what we started calling "field collision." When you write "mood": "tense and urgent" alongside "lighting": "warm golden hour", the model has to reconcile two signals that are visually contradictory. In prose, a skilled writer wouldn't pair those — the flow of a sentence exposes the contradiction before it ships. JSON lets you populate every field independently, and the model averages the conflict into something flat.
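One way to catch field collision before generating is a keyword lint over the mood and lighting fields. This is a sketch under loud assumptions: the conflict pairs below are hypothetical examples built around the "tense and urgent" versus "warm golden hour" case, and plain substring matching will miss synonyms.

```typescript
interface PromptFields {
  mood: string;
  lighting: string;
}

// Hypothetical conflict list: a mood keyword paired with a lighting keyword
// that pulls the image in the opposite direction. Grow it from real failures.
const CONFLICTS: Array<[string, string]> = [
  ["tense", "golden hour"],
  ["urgent", "golden hour"],
  ["tense", "warm"],
  ["calm", "harsh"],
  ["cozy", "fluorescent"],
];

// Return every (mood keyword, lighting keyword) pair found together.
function findCollisions(f: PromptFields): Array<[string, string]> {
  const mood = f.mood.toLowerCase();
  const lighting = f.lighting.toLowerCase();
  return CONFLICTS.filter(
    ([m, l]) => mood.indexOf(m) !== -1 && lighting.indexOf(l) !== -1,
  );
}
```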

The second failure mode is field sparseness. Prompt writers filling out a JSON template often leave composition as "centered subject" and lighting as "natural" — which is functionally the same as writing nothing. Prose forces you to at least construct a sentence, which tends to produce slightly more specific language even under laziness.
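Sparseness is easier to flag mechanically. The sketch below marks a field as sparse if its value is on a hypothetical list of generic defaults or runs under an assumed three-word minimum; both the list and the threshold are illustrative, not values from our test. Apply it to the descriptive fields (setting, lighting, composition) rather than mood, which is meant to be short.

```typescript
// Hypothetical list of values that look filled in but tell the model nothing.
const GENERIC_VALUES = ["natural", "centered", "centered subject", "good lighting", "nice", "default"];

// Assumed minimum: a descriptive field shorter than this is probably a placeholder.
const MIN_FIELD_WORDS = 3;

// Return the names of fields whose values are generic or too thin to steer the image.
function sparseFields(fields: Record<string, string>): string[] {
  return Object.keys(fields).filter((key) => {
    const value = fields[key].trim().toLowerCase();
    const wordCount = value.split(/\s+/).filter((w) => w.length > 0).length;
    return GENERIC_VALUES.indexOf(value) !== -1 || wordCount < MIN_FIELD_WORDS;
  });
}
```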

The third, and most expensive failure: JSON prompts were harder to debug when output was wrong. With prose, you can read the prompt and immediately see what's off. With JSON, you have to parse field interactions mentally. On a 50-ad run, that debugging overhead compounds fast.


Our scene distiller explicitly separates scene intent from the final image prompt. The LLM produces a JSON-structured intermediate — reasoning included — but the final string passed to Ideogram v3 is assembled prose. That's not an accident.
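That separation can be sketched as a structured scene object plus an assembly step that turns it into one prose paragraph. This is an illustrative sketch, not the contents of lib/creative/scene-distiller.ts: the `SceneIntent` fields mirror the JSON template in this post, the `reasoning` field is dropped before assembly, and the avoid list is returned separately because how exclusions reach Ideogram v3 depends on your own API integration.

```typescript
// Hypothetical shape of a structured scene; fields mirror the JSON template
// in this post, not necessarily the distiller's real types.
interface SceneIntent {
  subject: string;
  setting: string;
  lighting: string;
  composition: string;
  mood: string;
  avoid: string[];
  reasoning?: string; // useful for debugging; never sent to the image model
}

interface AssembledPrompt {
  prompt: string; // the prose paragraph sent to the image model
  avoid: string[]; // kept separate; how exclusions are passed is up to the integration
}

// Trim whitespace and trailing periods so fields join cleanly into sentences.
function clean(s: string): string {
  return s.trim().replace(/[\s.]+$/, "");
}

function capitalize(s: string): string {
  return s.charAt(0).toUpperCase() + s.slice(1);
}

// Turn the structured scene into one prose paragraph. Subject comes first,
// and the reasoning field is deliberately left out.
function assemble(scene: SceneIntent): AssembledPrompt {
  const sentences = [
    `${capitalize(clean(scene.subject))}, ${clean(scene.setting)}.`,
    `Lit by ${clean(scene.lighting)}.`,
    `${capitalize(clean(scene.composition))}.`,
    `${capitalize(clean(scene.mood))}.`,
  ];
  return { prompt: sentences.join(" "), avoid: scene.avoid.map(clean) };
}
```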

How to actually structure a JSON prompt (template)

If you're going to use JSON format, structure matters more than content. Here's the template we landed on after the test:

```json
{
  "subject": "A single, specific person or object doing one specific thing",
  "setting": "Exact location, time of day, environment details",
  "lighting": "Source, direction, quality — e.g. 'diffuse north light through frosted glass'",
  "composition": "Camera angle, focal length feel, depth of field, framing — e.g. 'low angle, wide, subject fills left third'",
  "mood": "One adjective + one physical sensation or color temperature",
  "avoid": ["list", "of", "specific", "elements", "to", "exclude"]
}
```

What makes this work:

- The subject field must contain a verb. "A hand" is bad. "A hand releasing a fistful of coins" is better.

- The lighting field should reference a real-world light source, not a vibe. "Moody" is a vibe. "Overcast skylight, no hard shadows" is a source.

- The mood field should hold two descriptors at most. The model can't balance five adjectives.

- The avoid field is a list, not a sentence. "Avoid laptops, coffee cups, and bright offices" as a sentence gets partially ignored. As an array, each element receives independent token weight.
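Those four rules can be checked before a prompt is sent. A rough sketch with hypothetical names throughout: the vibe-word list is illustrative, and the verb check is a crude "-ing" heuristic that accepts nouns like "building" and misses finite verbs like "grips", so treat its output as a hint rather than a verdict.

```typescript
interface TemplatePrompt {
  subject: string;
  setting: string;
  lighting: string;
  composition: string;
  mood: string;
  avoid: string[];
}

// Hypothetical list of words that describe a feeling rather than a light source.
const VIBE_WORDS = ["moody", "dramatic", "cinematic", "beautiful", "nice", "natural"];

// Return one message per rule the prompt appears to break.
function checkTemplate(p: TemplatePrompt): string[] {
  const problems: string[] = [];

  // Crude proxy for "contains a verb": look for any -ing word.
  if (!/\b\w+ing\b/i.test(p.subject)) {
    problems.push("subject: no -ing verb found; describe an action");
  }

  // Lighting made only of vibe words names a mood, not a source.
  const lightWords = p.lighting.toLowerCase().split(/[^a-z]+/).filter((w) => w.length > 0);
  if (lightWords.length > 0 && lightWords.every((w) => VIBE_WORDS.indexOf(w) !== -1)) {
    problems.push("lighting: describes a vibe, not a light source");
  }

  // Mood: count descriptors separated by commas, "+", or the word "and".
  const moodParts = p.mood
    .split(/,|\+|\band\b/i)
    .map((s) => s.trim())
    .filter((s) => s.length > 0);
  if (moodParts.length > 2) {
    problems.push(`mood: ${moodParts.length} descriptors; keep it to two`);
  }

  // Avoid: each entry should be one element, not a comma-joined sentence.
  for (const item of p.avoid) {
    if (item.indexOf(",") !== -1) {
      problems.push(`avoid: split "${item}" into separate entries`);
    }
  }
  return problems;
}
```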

The one field we removed from our early templates: "style". When you specify style in JSON, it competes with the platform-level style preset you've already set in the API call. Two style instructions; the model averages them into neither.
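If older templates still carry a style key, a small pre-send step can drop it so the API-level preset is the only style signal. A minimal sketch; the function name and the case-insensitive key match are assumptions, not part of any Ideogram client.

```typescript
interface StyleStripResult {
  cleaned: Record<string, unknown>;
  dropped: boolean;
}

// Copy the prompt object without any "style" key (matched case-insensitively),
// so the style preset set in the API call is the only style instruction.
function dropStyleKey(prompt: Record<string, unknown>): StyleStripResult {
  const cleaned: Record<string, unknown> = {};
  let dropped = false;
  for (const key of Object.keys(prompt)) {
    if (key.toLowerCase() === "style") {
      dropped = true;
      continue;
    }
    cleaned[key] = prompt[key];
  }
  return { cleaned, dropped };
}
```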

The 2-edit rule

Whether you're using prose or JSON, we found a consistent degradation pattern: prompts that went through more than two rounds of manual editing got worse, not better. Each edit adds qualifications; the qualifications add tokens; and the added tokens dilute the high-weight specific details that were doing the work.

Our internal system prompt for the scene distiller enforces 40–80 words per scene explicitly because we kept watching human editors inflate prompts past 150 words on revision passes, and output quality dropped every time. If your image isn't right after two edits, throw the prompt out and rewrite from the original brief. Patching a broken prompt compounds the original mistake.

This is especially punishing for JSON prompts. Field-by-field tweaks accumulate contradictions that are invisible until you read all the fields together — and most people don't do that before re-generating.
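The 2-edit rule and the word budget combine into a simple decision per revision. A sketch under stated assumptions: `history` holds the first draft followed by each manual edit, only the 80-word upper bound is enforced, and whether the image is "right" stays a human judgment passed in as a flag. All names are hypothetical.

```typescript
// Upper bound of the 40-80 word budget described above.
const MAX_SCENE_WORDS = 80;
// Edits allowed before the rule says to start over from the brief.
const MAX_EDITS = 2;

type NextStep = "ship" | "edit" | "trim" | "rewrite-from-brief";

function countWords(text: string): number {
  return text.split(/\s+/).filter((w) => w.length > 0).length;
}

// history[0] is the first draft; each later entry is one manual edit.
// imageIsRight is the reviewer's call on the latest generation.
function nextStep(history: string[], imageIsRight: boolean): NextStep {
  if (imageIsRight) return "ship";
  const edits = history.length - 1;
  // Two edits in and still wrong: stop patching.
  if (edits >= MAX_EDITS) return "rewrite-from-brief";
  // Over budget: cut words before adding any new qualifier.
  if (countWords(history[history.length - 1]) > MAX_SCENE_WORDS) return "trim";
  return "edit";
}
```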

What this means for your pipeline

If you're building or running an AI ad creative pipeline and deciding on a prompt format, here's what we'd ship:

- Use JSON as a drafting interface for humans. The fields force specificity. The structure prevents you from writing a 200-word run-on.

- Assemble prose for the model. Concatenate your filled JSON fields into a coherent paragraph before sending to the image API. The prose-from-JSON hybrid beat pure JSON across every category in our test.

- Hard-code the avoid field regardless of format. Stock photo clichés (person on laptop in coffee shop, diverse team high-fiving, hands on keyboard) will appear unless you exclude them by name. The scene distiller's system prompt lists these explicitly for exactly this reason.

- Keep scenes under 80 words. In our test, every scene over 100 words produced worse output than a shorter version of the same scene. Diffusion models weight earlier tokens more heavily; long prompts dilute your strongest specifics.

- One scene, one camera angle. The most common prompt mistake we see is asking for multiple compositions in a single prompt ("a wide shot showing the full room, with a close-up of the hands in the foreground"). Pick one.
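Several of these rules can run as one pre-flight check. A sketch, assuming scenes arrive as plain strings: the default avoid entries are the clichés named above (the distiller's real list may differ), while the shot-term list used to detect multiple compositions is a hypothetical starting point that will miss phrasing it doesn't contain.

```typescript
// Stock-photo clichés named in this post.
const DEFAULT_AVOID = [
  "person on laptop in coffee shop",
  "diverse team high-fiving",
  "hands on keyboard",
];

// Hypothetical shot vocabulary used to spot prompts asking for two compositions.
const SHOT_TERMS = ["wide shot", "close-up", "overhead", "low angle", "high angle", "macro"];

const SCENE_WORD_LIMIT = 80;

interface PreflightResult {
  avoid: string[];
  warnings: string[];
}

// Merge the default avoid list with scene-specific entries (case-insensitive
// dedupe), then flag over-long scenes and scenes naming more than one shot.
function preflight(scene: string, sceneAvoid: string[]): PreflightResult {
  const avoid: string[] = [];
  const seen: string[] = [];
  for (const item of DEFAULT_AVOID.concat(sceneAvoid)) {
    const key = item.toLowerCase();
    if (seen.indexOf(key) === -1) {
      seen.push(key);
      avoid.push(item);
    }
  }

  const warnings: string[] = [];
  const words = scene.split(/\s+/).filter((w) => w.length > 0).length;
  if (words > SCENE_WORD_LIMIT) {
    warnings.push(`scene is ${words} words; the budget is ${SCENE_WORD_LIMIT}`);
  }

  const text = scene.toLowerCase();
  const shots = SHOT_TERMS.filter((t) => text.indexOf(t) !== -1);
  if (shots.length > 1) {
    warnings.push(`multiple compositions requested: ${shots.join(", ")}`);
  }
  return { avoid, warnings };
}
```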

The model you're prompting was trained on human-written captions and alt-text, not JSON files. Write for what it learned from.

FAQ

Is JSON prompting better than prose for AI image generation?

In our 50-ad test on Ideogram v3, neither format won across the board. Prose outperformed JSON in lifestyle, people-focused, and outdoor scenes. JSON outperformed prose in fintech, abstract-metaphor, and SaaS-adjacent scenes — by meaningful margins in the fintech categories specifically (prose 2.8 vs JSON 4.2 on abstract metaphor). The more useful answer: JSON is better for disciplining the prompt writer, not the model.

Do image generation models actually parse JSON?

No. Ideogram v3, DALL·E 3, and Midjourney all tokenize JSON as a flat sequence of text tokens. The curly braces and field labels are just more tokens. What JSON does do is impose structural discipline on the author, which tends to produce more explicit, separated descriptions. The model benefits from that explicitness — not from the format itself.

Why does the avoid field work better in JSON than in prose?

When avoid is a dedicated array field, each excluded element is its own entry directly under the negation label. In a sentence like "do not include laptops, coffee cups, or cluttered offices," the negation has to carry across all three items and can get lost. As an array, each item receives its own token weight. This isn't a JSON syntax effect; it's a proximity effect. The "avoid" label heads the list directly, so no item is separated from it by a run of other clauses.

How long should an AI image prompt be?

Under 100 words for Ideogram v3 — and we'd push for 40–80 if you can hit it. In our test, every scene over 100 words underperformed a shorter version of the same intent. Diffusion models weight earlier tokens more heavily; long prompts dilute your strongest specifics toward the end.

Should I include style instructions in my image prompt?

Only if you're not already setting style via API parameters. If you're using Ideogram v3's style preset field, adding a "style" key in your JSON prompt creates two competing style instructions. The model averages them. Pick one channel.

What's the biggest prompt mistake people make when generating ad images?

Embedding the ad headline or copy in the prompt. Image generation models will attempt to render text as visible words in the image, and they're bad at it. The scene distiller system prompt flags this as a critical rule: never quote the ad copy; let the visual imply the message. The copy goes in the ad overlay layer, not the image prompt.
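A pre-send guard for this rule can compare the prompt against the headline and flag quoted strings. A minimal sketch with hypothetical names; matching after normalization catches verbatim copy but not paraphrases.

```typescript
// Lowercase, replace punctuation with spaces, and collapse whitespace.
function normalizeText(s: string): string {
  return s
    .toLowerCase()
    .replace(/[^a-z0-9\s]/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Return the reasons a prompt looks like it carries ad copy into the image.
function copyLeaks(prompt: string, headline: string): string[] {
  const issues: string[] = [];
  const h = normalizeText(headline);
  if (h.length > 0 && normalizeText(prompt).indexOf(h) !== -1) {
    issues.push("prompt contains the ad headline");
  }
  // Straight or curly double quotes around any text.
  if (/["\u201C\u201D][^"\u201C\u201D]+["\u201C\u201D]/.test(prompt)) {
    issues.push("prompt contains quoted text the model may try to render");
  }
  return issues;
}
```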

How do I know when to rewrite a prompt vs iterate on it?

The 2-edit rule: if the image still isn't right after two revisions, stop patching and rewrite from the original brief. Each iteration adds tokens, dilutes specifics, and compounds the original mistake. This is especially true for JSON prompts, where field-by-field tweaks accumulate contradictions that are easy to miss when you're only looking at one field at a time.

The specific takeaway: write your next prompt in JSON to force yourself to fill each field explicitly, then concatenate those fields into a single prose paragraph before you hit send. You'll write cleaner prose and the model will produce better output than if you'd handed it the raw JSON. We're building that translation step directly into the pipeline — the score delta in our fintech categories made the engineering case for us.

We build AdControlCenter — AI-powered ad management for anyone running their own ads. We write what we'd want to read: real numbers, no fluff, the things we wish we'd known when we started.

More from the team →Keep reading

All posts →

Product fidelity in AI ads: catching when the model swaps your product

AI image models will silently replace your actual product with a plausible-looking substitute — here's exactly how to detect and prevent it.

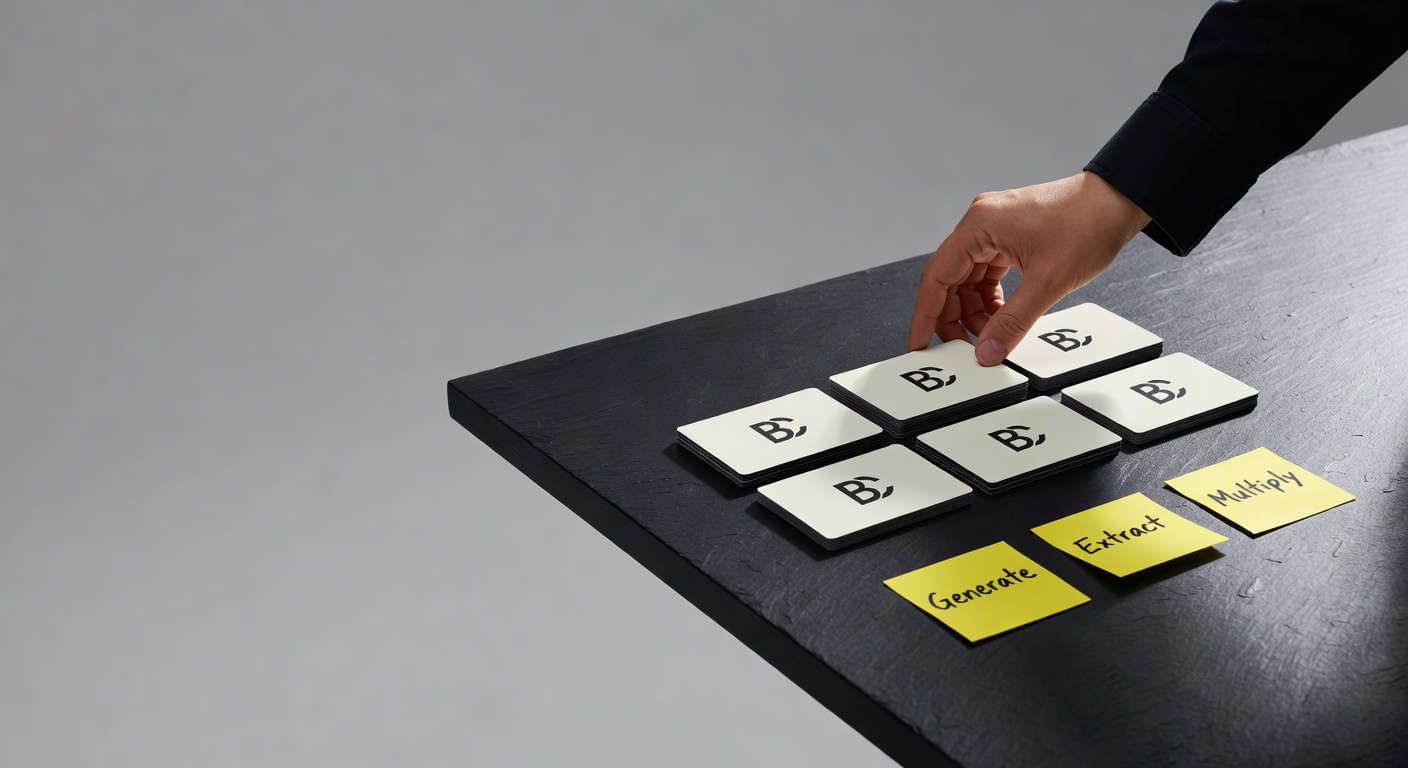

The G.E.M framework, but for static ads

The G.E.M framework was built for AI video—but its Generate / Extract / Multiply logic turns out to be exactly what broken static ad workflows need.

How AI Image Generation Is Changing Ad Creative

Three years ago a single ad image cost $200 and a week. Today it costs ten cents and ten seconds. The economics of testing changed completely — but most operators are still running creative like it's 2023.